Deeper Dive

My novel techniques, as applied to OccamNet, enable the architecture to fit functions with constants, something OccamNet could not previously do, and to fit a wider range of implicit equations more effectively. Fitting functions with constants is essential to nearly all applications of interpretable neural networks, and fitting implicit equations is helpful for unsupervised learning and for modeling many relationships that arise in nature. Thus, by extending OccamNet’s abilities in these two classes of functions, I expanded the architecture’s scope and made this new approach to interpretable neural networks more effective. In fact, with my new approach, OccamNet is able to fit any class of function, outperforming the state-of-the-art implicit equation fitting technique on many equations and the state-of-the-art symbolic regression algorithm across numerous real-world datasets. These results establish my version of OccamNet as a promising candidate in the field of symbolic regression. Importantly, my improved OccamNet architecture is interpretable and generalizes outside the training data range more effectively than standard neural networks, thereby contributing to the greater adoption of interpretable and reliable machine learning techniques.

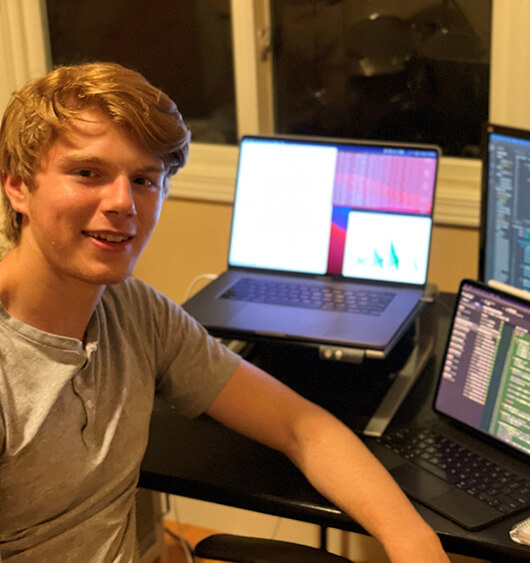

I was drawn to the challenge of neural network interpretability after reading both technical and philosophical papers that detailed the problems of uninterpretable neural networks and the enormous potential of deep learning that remains untapped as a result. When I was later accepted to the Research Science Institute (RSI) and asked what problem I found most interesting, the answer was clear.

Fortunately, a research group at MIT was working on a novel interpretable neural network architecture (OccamNet), and, seeing my experience with computer science and math, the group offered to mentor me through the RSI program. During RSI (which was conducted virtually due to the COVID-19 pandemic), I made significant contributions to OccamNet.